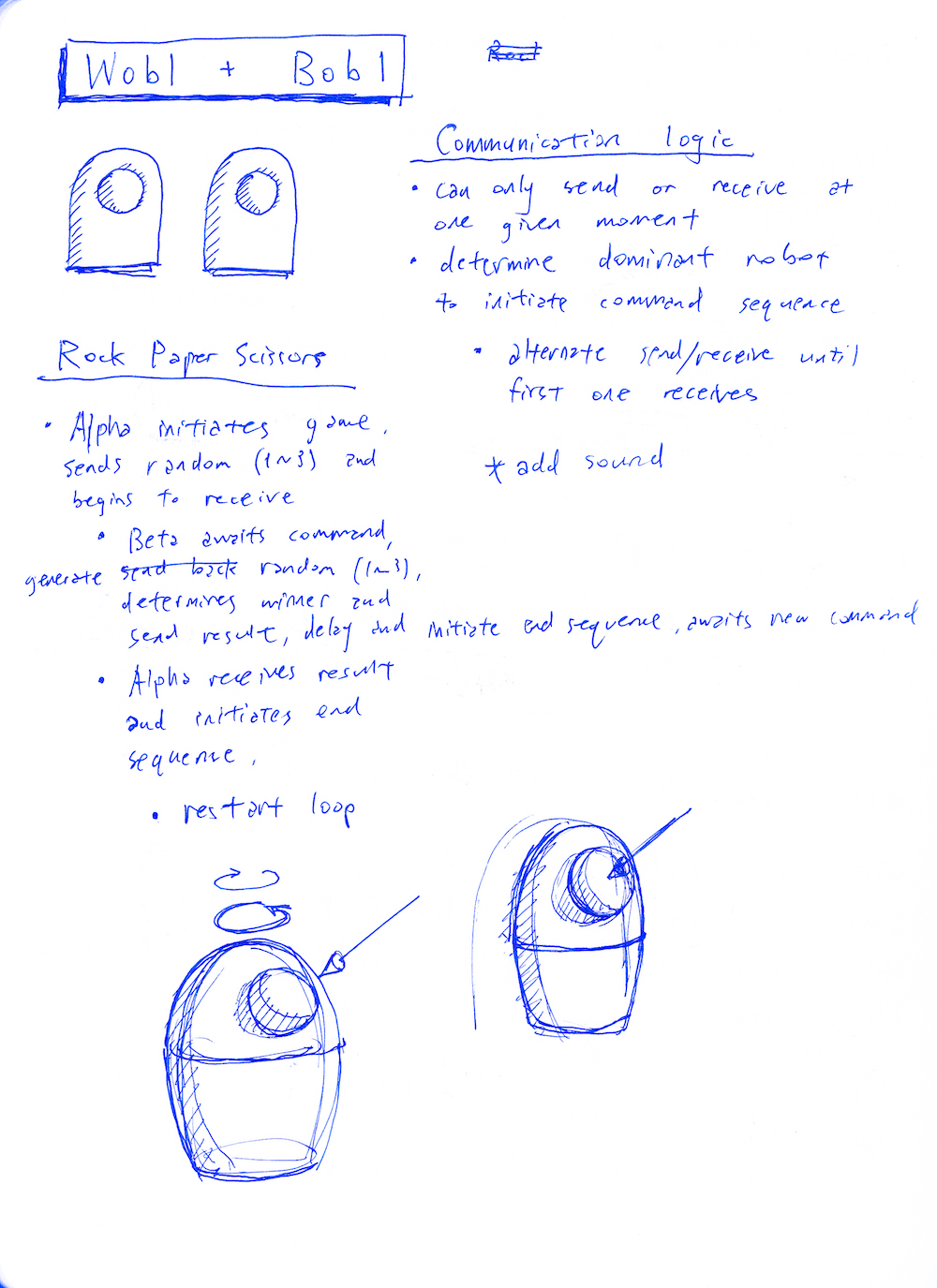

Wobl Bobl

A pair of robots that talk through infrared signals.

Inspired by swarm robots, I wanted to simulate a natural emergence of complexity from a simple program. By having a pair of robots speak together in their own robotic language, I hoped to create an impression of higher intelligence.

For the robots to communicate asynchronously, I needed to develop a communication protocol. That meant mapping out the inputs and outputs and designing the protocol so that each robot carries the code to both send and receive signals. I chose to work with infrared transceivers and worked step by step towards a functional protocol.
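As a rough sketch of what such a protocol could look like (this is not the robots' actual code): each message is packed into a small frame with a sender ID, one message byte, and a checksum, so a robot can discard corrupted frames and reflections of its own transmissions. The `Frame` layout, the `0xA5` salt, and the function names are all assumptions made for illustration.

```cpp
#include <cstdint>

// Hypothetical frame layout: [sender id][message][checksum], 24 bits total.
struct Frame {
    uint8_t sender;   // which robot sent the frame
    uint8_t message;  // one "word" of the robots' language
};

// XOR checksum with a fixed salt so an all-zero word is never a valid frame.
inline uint8_t frameChecksum(uint8_t sender, uint8_t message) {
    return static_cast<uint8_t>(sender ^ message ^ 0xA5);
}

// Pack a frame into the low 24 bits of a word for transmission.
inline uint32_t encodeFrame(const Frame& f) {
    return (static_cast<uint32_t>(f.sender) << 16) |
           (static_cast<uint32_t>(f.message) << 8) |
           frameChecksum(f.sender, f.message);
}

// Unpack a received word. Returns false for corrupted frames and for
// frames that carry our own ID (for example, an IR reflection).
inline bool decodeFrame(uint32_t raw, uint8_t ownId, Frame& out) {
    uint8_t sender = static_cast<uint8_t>((raw >> 16) & 0xFF);
    uint8_t message = static_cast<uint8_t>((raw >> 8) & 0xFF);
    uint8_t received = static_cast<uint8_t>(raw & 0xFF);
    if (received != frameChecksum(sender, message)) return false;
    if (sender == ownId) return false;
    out.sender = sender;
    out.message = message;
    return true;
}
```

Because both robots carry the same encode and decode functions, either one can start a conversation, and the other only reacts to frames that pass both checks.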

I was able to salvage all the materials for the body from the design studios and Walgreens. There's a paper cup to hold the electronics, sculpture wire to add support, a ping pong ball for the head, and a fuzzy sock to package it all together.

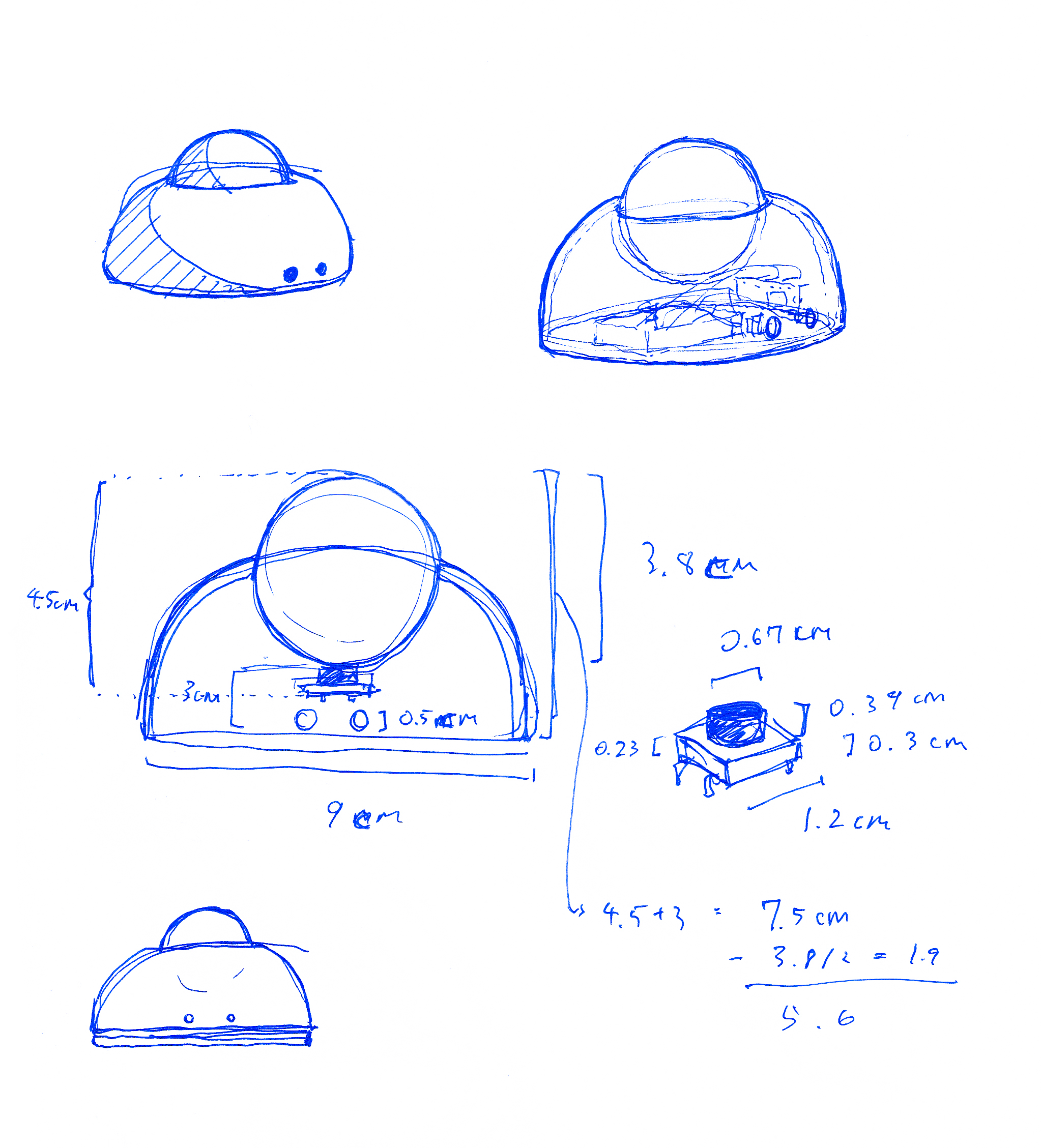

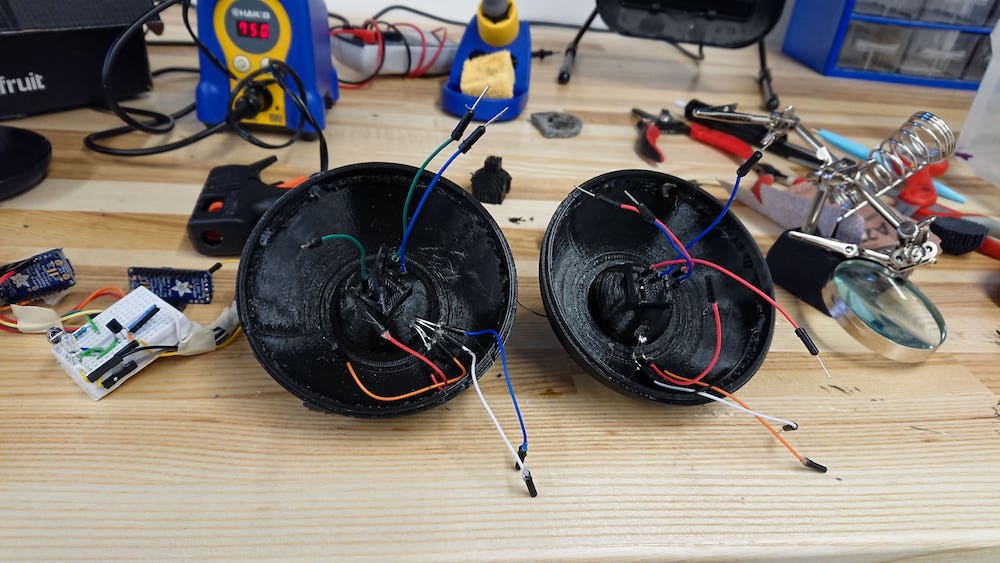

The soft body of the original robot was a little too fragile, so I decided to create a hard-shelled robot instead. This allowed me to create a robust body and standardize the construction.

After sketching out a variety of different forms for the outer shell, I chose one that I felt would be the most feasible to 3D print and house my components.

Sound Shapes

Creating a new tactile and interactive way to generate music.

While I was taking a music production class, the section covering waveforms and modulation drew my attention. Because electronic music isn't tied to a physical instrument, I wondered if there could be a way to reintroduce the physical form into the process of making electronic music. By using shapes that resembled the different waveforms, I could create a set of objects that modulate a soundwave in an interactive and exciting way.

For my first prototype, I created four objects representing a sine, square, triangle, and sawtooth wave. The shapes themselves didn't correspond to the waveforms as closely as I would have liked, but this was a good starting point.

I wanted the blocks to be able to communicate independently with a central control tower, which would then output the final modulated soundwave. This required embedding an RF emitter within each block and pairing them with a single receiver connected to a sound board. The challenge was to write a program that could read RF inputs simultaneously from multiple sources and modulate the soundwave in real time.

Each block is built with an MPU6050 accelerometer/gyro, an Arduino Uno, and an NRF24 transceiver. It sends the x-axis acceleration over to the receiving end, which maps it to an output tone played on the speaker. The x-axis acceleration modulates the volume (amplitude) of the sound wave, and the z-axis gyroscopic velocity modulates the tone (frequency).
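The mapping on the receiving end might look something like the sketch below. It assumes the raw MPU6050 readings arrive as signed 16-bit values; the 110 to 880 Hz pitch range, the 0 to 255 volume scale, and the names `mapClamped` and `toneFromMotion` are illustrative choices, not values taken from the project.

```cpp
#include <cstdint>

// Linearly rescale x from [inLo, inHi] to [outLo, outHi],
// clamping x to the input range first.
inline long mapClamped(long x, long inLo, long inHi, long outLo, long outHi) {
    if (x < inLo) x = inLo;
    if (x > inHi) x = inHi;
    return outLo + (x - inLo) * (outHi - outLo) / (inHi - inLo);
}

struct Tone {
    uint8_t volume;        // 0 (silent) to 255 (loudest)
    uint16_t frequencyHz;  // pitch of the output tone
};

// Turn one motion sample into a tone: the magnitude of the x-axis
// acceleration sets the volume, the z-axis rotation rate sets the pitch.
inline Tone toneFromMotion(int16_t accelX, int16_t gyroZ) {
    long magnitude = accelX < 0 ? -static_cast<long>(accelX)
                                : static_cast<long>(accelX);
    Tone t;
    t.volume = static_cast<uint8_t>(mapClamped(magnitude, 0, 32767, 0, 255));
    t.frequencyHz =
        static_cast<uint16_t>(mapClamped(gyroZ, -32768, 32767, 110, 880));
    return t;
}
```

Clamping before scaling keeps a sudden jolt from wrapping around into a quiet or low-pitched tone.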

There hasn't really been a simple way to adjust volume on an Arduino, only the hard way. Volume, or amplitude, is traditionally adjusted with either an analog or a digital potentiometer that varies the current traveling to the speaker. The voltage, which determines the amplitude of the current, effectively sets how loud the sound is perceived to be. Arduinos use something called pulse width modulation (PWM) to simulate a change in the output voltage. If you oscillate between 10 V and 0 V, with the 10 V pulsed half the time, the output is treated like 5 V. The frequency at which the Arduino modulates the waveform is imperceptible when used to control LEDs, but sonically, you'd be able to hear the periods in between the peaks. However, with the help of this library, the timer can be sped up so that the frequency is fast enough to simulate different waveforms.
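The PWM arithmetic above can be written out in a few lines. This is a sketch of the idealized relationship only (average voltage equals the high voltage times the duty cycle) and is not code from the volume library; `pwmAverageVolts` and `dutyToByte` are hypothetical helper names. The 0 to 255 range matches the 8-bit duty value that `analogWrite` accepts on an Arduino Uno.

```cpp
#include <cstdint>

// Clamp a fraction to the range 0.0 .. 1.0.
inline double clampUnit(double x) {
    if (x < 0.0) return 0.0;
    if (x > 1.0) return 1.0;
    return x;
}

// Average voltage of an ideal PWM signal that switches between 0 V and
// highVolts and spends dutyCycle (0.0 .. 1.0) of each period high.
inline double pwmAverageVolts(double highVolts, double dutyCycle) {
    return highVolts * clampUnit(dutyCycle);
}

// Convert a duty-cycle fraction to the nearest 8-bit duty value (0 .. 255).
inline uint8_t dutyToByte(double dutyCycle) {
    return static_cast<uint8_t>(clampUnit(dutyCycle) * 255.0 + 0.5);
}
```

With these helpers, `pwmAverageVolts(10.0, 0.5)` reproduces the 10 V to 5 V example from the paragraph above.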

Being able to adjust the volume of the audio with one pin was already a miracle to begin with. What I've found now, which I had already deemed impossible, changes everything. This library Jon Thompson created essentially acts as a waveform generator. Almost everywhere I looked, the forums said that it was impossible without a built-in DAC like the one in the Arduino Due, or that I had to build a DAC myself with some complex circuitry. The code takes advantage of high-frequency PWM, like in the volume library, but uses it to control the sound wave, so it can output saw, sine, square, and triangular waves. This means that my initial goal of having different shapes modulate the waveform of the sound is actually achievable.
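To illustrate the general idea of driving PWM from waveform samples, here is a minimal sketch that computes one 8-bit sample for each of the four wave shapes. It is not the API or the implementation of the library mentioned above; the `Wave` enum, the `waveSample` function, and the 256-step cycle are assumptions. On real hardware, each returned value would be written as the PWM duty value at a fixed sample rate.

```cpp
#include <cmath>
#include <cstdint>

enum class Wave { Sine, Square, Triangle, Saw };

// Return an 8-bit sample (0 .. 255) for a phase step (0 .. 255)
// within one cycle of the chosen waveform.
inline uint8_t waveSample(Wave w, uint8_t phase) {
    switch (w) {
        case Wave::Sine: {
            const double kTwoPi = 6.283185307179586;
            double s = std::sin(kTwoPi * phase / 256.0);
            // Shift and scale from -1 .. 1 to 0 .. 255.
            return static_cast<uint8_t>(std::lround(127.5 + 127.5 * s));
        }
        case Wave::Square:
            // High for the first half of the cycle, low for the second.
            return phase < 128 ? 255 : 0;
        case Wave::Triangle:
            // Ramp up for the first half, back down for the second.
            return phase < 128 ? static_cast<uint8_t>(phase * 2)
                               : static_cast<uint8_t>((255 - phase) * 2 + 1);
        case Wave::Saw:
            // Steady ramp that snaps back to zero at the end of the cycle.
            return phase;
    }
    return 0;
}
```

Stepping `phase` faster or slower changes the pitch, while switching the `Wave` value changes the timbre, which is what each physical shape would select.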

Demo with z-rotation controlling volume and x-rotation controlling the pitch.

Using quaternions derived from the gyro readings, I was able to simulate the shapes virtually with Processing.
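One common way to get an orientation quaternion from gyro readings is to integrate the angular velocity over time, as sketched below. This is an assumption about the approach: the write-up does not say whether the quaternions came from a manual integration like this or from the MPU6050's onboard motion processor. `Quat` and `integrateGyro` are hypothetical names, and the rates are expected in radians per second.

```cpp
#include <cmath>

struct Quat {
    double w, x, y, z;
};

// Advance orientation q by a body-frame angular velocity (gx, gy, gz)
// in rad/s over dt seconds. Uses a first-order step of
// dq/dt = 0.5 * q * (0, gx, gy, gz), then renormalizes to unit length.
inline Quat integrateGyro(Quat q, double gx, double gy, double gz, double dt) {
    double h = 0.5 * dt;
    Quat n;
    n.w = q.w + h * (-q.x * gx - q.y * gy - q.z * gz);
    n.x = q.x + h * (q.w * gx + q.y * gz - q.z * gy);
    n.y = q.y + h * (q.w * gy - q.x * gz + q.z * gx);
    n.z = q.z + h * (q.w * gz + q.x * gy - q.y * gx);
    double norm = std::sqrt(n.w * n.w + n.x * n.x + n.y * n.y + n.z * n.z);
    n.w /= norm;
    n.x /= norm;
    n.y /= norm;
    n.z /= norm;
    return n;
}
```

Calling this once per sensor reading and sending the resulting quaternion over serial would be enough for a Processing sketch to rotate a virtual shape in step with the physical one. Pure gyro integration drifts over time, so longer sessions would need some correction.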

Plantbot

Ever wished that your plant could be more like a pet? The plantbot is a robo-suit that grants your plant sentience, and a bit more personality.

I started with the idea of creating a robot plant pot that would move towards the light and notify its owner when it's time for watering. The pots could be switched out, and different programmed attributes and personalities could be determined by the plant variety.

The robot employs four light sensors to figure out which direction to move. It only rests once it's reached a spot with adequate lighting.
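The light-seeking behavior could be sketched as follows. The sensor placement (front, back, left, right), the use of the average reading as the test for adequate lighting, and the names `LightReadings` and `chooseMove` are assumptions for illustration, not details from the build.

```cpp
enum class Move { Rest, Forward, Backward, Left, Right };

// One reading per light sensor; larger numbers mean brighter light.
struct LightReadings {
    int front;
    int back;
    int left;
    int right;
};

// Rest when the average light level is adequate; otherwise head
// towards whichever sensor currently sees the most light.
inline Move chooseMove(const LightReadings& r, int adequateLevel) {
    int average = (r.front + r.back + r.left + r.right) / 4;
    if (average >= adequateLevel) return Move::Rest;

    Move best = Move::Forward;
    int brightest = r.front;
    if (r.back > brightest) {
        brightest = r.back;
        best = Move::Backward;
    }
    if (r.left > brightest) {
        brightest = r.left;
        best = Move::Left;
    }
    if (r.right > brightest) {
        best = Move::Right;
    }
    return best;
}
```

Running this decision in a loop makes the robot keep nudging itself towards the brightest side until the resting condition is met.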

A surprisingly difficult task was figuring out how to play mp3 files from an SD card. Depending on the state of the robot, different audio clips would be triggered. By feeding two wires from the inside of the soil onto an outer railing, the moisture sensor would also detect when a plant pot has been inserted or removed.
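A single analog reading can cover both jobs of the moisture sensor, as in this sketch. It assumes a 10-bit reading (0 to 1023, as returned by `analogRead` on an Arduino Uno) and a circuit wired so that an open circuit, meaning no pot on the rails, reads near zero while wetter soil reads higher. The thresholds and the names `PotState` and `classifyMoisture` are hypothetical and would need tuning against the real sensor.

```cpp
enum class PotState { NoPot, NeedsWater, Watered };

// Classify a 10-bit moisture reading (0 .. 1023).
// Readings at or below openCircuitMax are treated as "no pot inserted";
// readings at or below dryMax are treated as dry soil.
inline PotState classifyMoisture(int reading,
                                 int openCircuitMax = 20,
                                 int dryMax = 400) {
    if (reading <= openCircuitMax) return PotState::NoPot;
    if (reading <= dryMax) return PotState::NeedsWater;
    return PotState::Watered;
}
```

Each state could then select which audio clip to play, for example a greeting when the state changes from `NoPot` to anything else.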

ODDS AND ENDS